Top 5 Vector Databases to Try in 2024

Vector databases, also referred to as vectorized databases or vector stores, are a specialized category of database built to store and retrieve high-dimensional vectors efficiently.

In the database context, a vector is an ordered sequence of numbers that represents a position in a multi-dimensional space. Each component of the vector corresponds to a distinct feature or dimension.

These databases are particularly well suited to applications that deal with large, complex datasets in domains such as machine learning, natural language processing, image processing, and similarity search.

Conventional relational databases can struggle to manage high-dimensional data and to run similarity searches efficiently, which makes vector databases a valuable alternative in these scenarios.

What are the Key Attributes of Vector Databases?

The key attributes of vector databases include:

Optimized Vector Storage

Vector databases are optimized for storing and retrieving high-dimensional vectors, often using specialized data structures and algorithms.

Proficient Similarity Search

These databases excel at similarity search: given a query vector, they find the stored vectors closest to it according to a chosen metric such as cosine similarity or Euclidean distance.
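To make the two metrics concrete, here is a minimal sketch in plain Python. The function names and sample vectors are made up for illustration; production systems compute the same quantities with heavily optimized native code.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between a and b: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between a and b: 0.0 means identical."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

query = [1.0, 0.0]
same_direction = [2.0, 0.0]  # same direction as the query, but twice as long
orthogonal = [0.0, 1.0]

print(cosine_similarity(query, same_direction))   # 1.0
print(euclidean_distance(query, same_direction))  # 1.0
print(cosine_similarity(query, orthogonal))       # 0.0
```

Note how the two metrics disagree on the second vector: cosine similarity ignores length and calls it a perfect match, while Euclidean distance still sees a gap of 1.0. Which one fits depends on whether vector magnitude carries meaning in your data.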

Scalability

Vector databases are designed to scale horizontally, handling large data volumes and query loads by distributing the work across multiple nodes.
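One common way to picture this is "scatter and gather": each node searches only its own shard, and the partial results are merged into a global top k. The sketch below simulates that in a single process; the shard layout and function names are assumptions for illustration, not any particular product's design.

```python
import heapq
import math

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def search_shard(shard, query, k):
    """Return the k closest (distance, id) pairs within one shard."""
    scored = ((euclidean_distance(vec, query), vec_id) for vec_id, vec in shard.items())
    return heapq.nsmallest(k, scored)

def search_all_shards(shards, query, k):
    """Query every shard, then merge the partial results into a global top k."""
    partial = []
    for shard in shards:  # in a real cluster these calls would run in parallel on separate nodes
        partial.extend(search_shard(shard, query, k))
    return heapq.nsmallest(k, partial)

# Two hypothetical shards, each holding part of the collection.
shards = [
    {"a": [0.0, 0.0], "b": [5.0, 5.0]},
    {"c": [1.0, 0.0], "d": [9.0, 9.0]},
]
top = search_all_shards(shards, query=[0.0, 0.0], k=2)
print([vec_id for _, vec_id in top])  # ['a', 'c']
```

Because every shard returns its own best k candidates, the merged result is the same as searching the whole collection at once.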

Support for Embeddings

Vector databases are frequently used to store the vector embeddings generated by machine learning models, which represent data in a continuous, dense space. Such embeddings are common in tasks like natural language processing and image analysis.

Real-time Processing

Many vector databases are optimized for real-time or near-real-time processing, which makes them well suited to applications that need prompt responses and low latency.

What is a Vector Database?

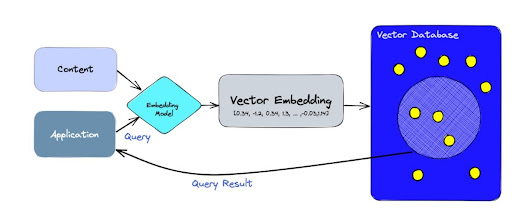

A vector database is a specialized database designed to store data as multi-dimensional vectors that represent various attributes or qualities. Each piece of information, whether words, pictures, sounds, or videos, is turned into a vector.

This transformation is done using methods like machine learning models, word embeddings, or feature extraction techniques.

The key advantage of this database lies in its capacity to swiftly and accurately locate and retrieve data based on the proximity or similarity of vectors.

This approach enables searches based on semantic or contextual relevance rather than solely relying on precise matches or specific criteria, as seen in traditional databases.

So, let’s say you’re looking for something. With a vector database, you can:

- Find songs that feel similar in their tune or rhythm.

- Discover articles that talk about similar ideas or themes.

- Spot gadgets that seem similar based on their characteristics and reviews.
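As a rough illustration of the first bullet, the sketch below ranks songs by how close their feature vectors are. The song names and the three features are invented for this example; a real system would derive its vectors from audio models rather than hand-picked numbers.

```python
import math

# Hypothetical, hand-made feature vectors: [tempo, energy, acousticness], each scaled to 0..1.
songs = {
    "upbeat_pop": [0.90, 0.80, 0.10],
    "dance_remix": [0.95, 0.90, 0.05],
    "acoustic_folk": [0.30, 0.20, 0.90],
    "piano_ballad": [0.20, 0.15, 0.95],
}

def euclidean_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def most_similar(title, catalog, k=1):
    """Rank every other item by distance to the chosen one and keep the k closest."""
    query = catalog[title]
    others = [
        (euclidean_distance(query, vec), name)
        for name, vec in catalog.items()
        if name != title
    ]
    return [name for _, name in sorted(others)[:k]]

print(most_similar("upbeat_pop", songs))    # ['dance_remix']
print(most_similar("piano_ballad", songs))  # ['acoustic_folk']
```

No exact match on title, artist, or genre is involved: the result comes purely from which vectors sit closest together.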

How do Vector Databases Work?

Imagine traditional databases as tables that neatly store simple things like words or numbers.

Now, think of vector databases as super smart systems handling complex information known as vectors using unique search methods.

Unlike regular databases that hunt for exact matches, vector databases take a different approach. They’re all about finding the closest match using special measures of similarity.

These databases rely on a fascinating search technique called Approximate Nearest Neighbor (ANN) search.
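To give a feel for what "approximate" means, here is a minimal sketch of one classic ANN idea, random-hyperplane locality-sensitive hashing (LSH). It is chosen here only because it fits in a few lines; it is not a claim about which algorithm any particular database uses, and all names and parameters are illustrative.

```python
import random

def make_hyperplanes(dim, n_bits, seed=0):
    """Draw n_bits random hyperplanes; each contributes one bit to the hash."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_bits)]

def lsh_key(vec, hyperplanes):
    """Hash a vector to a tuple of bits: which side of each hyperplane it lies on."""
    return tuple(sum(h * v for h, v in zip(plane, vec)) >= 0 for plane in hyperplanes)

def build_index(vectors, hyperplanes):
    """Group vector ids into buckets keyed by their hash."""
    buckets = {}
    for vec_id, vec in vectors.items():
        buckets.setdefault(lsh_key(vec, hyperplanes), []).append(vec_id)
    return buckets

def ann_search(query, vectors, buckets, hyperplanes, k=1):
    """Compare the query only against vectors in its own bucket (approximate)."""
    candidates = buckets.get(lsh_key(query, hyperplanes), [])

    def sq_dist(vec_id):
        return sum((a - b) ** 2 for a, b in zip(vectors[vec_id], query))

    return sorted(candidates, key=sq_dist)[:k]

rng = random.Random(42)
vectors = {i: [rng.uniform(-1, 1) for _ in range(8)] for i in range(1000)}
planes = make_hyperplanes(dim=8, n_bits=6)
buckets = build_index(vectors, planes)

# A stored vector always hashes to its own bucket, so searching for it finds it.
print(ann_search(vectors[7], vectors, buckets, planes))  # [7]
```

The trade-off is visible in `ann_search`: it inspects one bucket instead of all 1,000 vectors, which is faster but can miss a true neighbor that happened to land in a different bucket.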

Now, the secret sauce behind how these databases work lies in something called “embeddings.”

Picture unstructured data like text, images, or audio – it doesn’t fit neatly into tables.

So, to make sense of this data in AI or machine learning, it gets transformed into number-based representations using embeddings.

Special neural networks do the heavy lifting for this embedding process. For instance, word embeddings convert words into vectors in a way that similar words end up closer together in the vector space.

This transformation acts as a translator, turning non-numeric data into a language that machine learning models can understand.

Once data is expressed as vectors, algorithms can spot patterns, connections, and likenesses between different items more efficiently.
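The idea that similar words end up closer together can be shown with a toy example. The three-dimensional vectors below are written by hand purely for illustration; real embeddings come from a trained model and typically have hundreds of dimensions.

```python
import math

# Hand-written 3-dimensional "embeddings" for illustration only.
toy_embeddings = {
    "king": [0.90, 0.80, 0.10],
    "queen": [0.85, 0.90, 0.10],
    "banana": [0.10, 0.05, 0.95],
}

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def closest_word(word, embeddings):
    """Return the other word whose vector points in the most similar direction."""
    others = (w for w in embeddings if w != word)
    return max(others, key=lambda w: cosine_similarity(embeddings[word], embeddings[w]))

print(closest_word("king", toy_embeddings))  # queen
```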

What are the Best Vector Databases for 2024?

We’ve prepared a list of the top 5 vector databases for 2024:

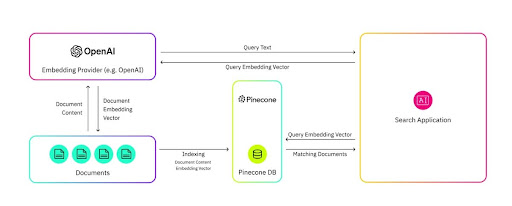

1. Pinecone

First things first, Pinecone is not open source.

It is a cloud-based vector database managed by users via a simple API, requiring no infrastructure setup.

Pinecone allows users to initiate, manage, and enhance their AI solutions without the hassle of handling infrastructure maintenance, monitoring services, or fixing algorithm issues.

It processes data quickly and supports metadata filters and sparse-dense indexes, giving precise and rapid results across a range of search requirements.

Its key features include:

- Identifying duplicate entries.

- Tracking rankings.

- Conducting data searches.

- Classifying data.

- Eliminating duplicate entries.
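Duplicate detection and removal both boil down to the same similarity test: two records count as duplicates when their vectors are nearly identical. The sketch below shows the idea in plain Python. It does not use Pinecone's API, and the 0.99 threshold and record names are assumptions picked for the example.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def find_duplicates(vectors, threshold=0.99):
    """Return id pairs whose cosine similarity is at or above the threshold."""
    ids = list(vectors)
    pairs = []
    for i, first in enumerate(ids):
        for second in ids[i + 1:]:
            if cosine_similarity(vectors[first], vectors[second]) >= threshold:
                pairs.append((first, second))
    return pairs

def deduplicate(vectors, threshold=0.99):
    """Keep the first item of each duplicate pair and drop the second."""
    dropped = {second for _, second in find_duplicates(vectors, threshold)}
    return {vec_id: vec for vec_id, vec in vectors.items() if vec_id not in dropped}

records = {
    "doc-1": [0.50, 0.50, 0.10],
    "doc-2": [0.51, 0.49, 0.10],  # nearly identical to doc-1
    "doc-3": [0.05, 0.10, 0.90],
}
print(find_duplicates(records))      # [('doc-1', 'doc-2')]
print(sorted(deduplicate(records)))  # ['doc-1', 'doc-3']
```

The pairwise loop is quadratic, which is fine for a demo; at scale, the nearest-neighbor index does the heavy lifting of finding candidate pairs.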

For additional insights into Pinecone, explore the tutorial “Mastering Vector Databases with Pinecone” by Moez Ali, available on DataCamp.

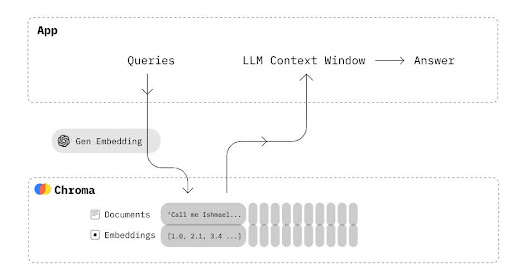

2. Chroma

Chroma is an open-source embedding database designed to simplify the development of LLM (Large Language Model) applications.

Its core focus lies in enabling easy integration of knowledge, facts, and skills for LLMs.

Our exploration into Chroma DB highlights its capability to effortlessly handle text documents, transform text into embeddings, and conduct similarity searches.

Key features:

- Equipped with various functionalities such as queries, filtering, density estimates, and more.

- Support for LangChain (Python and JavaScript) and LlamaIndex.

- Uses the same API in a Python notebook as in a production cluster, so prototypes scale up without code changes.
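The add-documents, embed, then query workflow described above can be sketched in a few lines. This is not Chroma's API: `TinyCollection` is a hypothetical in-memory class, and `embed` is a crude word-count stand-in for the embedding model a real setup would call.

```python
import math
from collections import Counter

def embed(text):
    """Stand-in embedding: a sparse word-count vector, not a learned model."""
    return Counter(text.lower().split())

def cosine_similarity(a, b):
    dot = sum(a[word] * b[word] for word in a if word in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

class TinyCollection:
    """Hypothetical in-memory collection: stores documents with their embeddings."""

    def __init__(self):
        self.documents = {}
        self.embeddings = {}

    def add(self, doc_id, text):
        self.documents[doc_id] = text
        self.embeddings[doc_id] = embed(text)

    def query(self, text, n_results=1):
        """Return the ids of the n_results documents most similar to the query text."""
        q = embed(text)
        ranked = sorted(
            self.embeddings,
            key=lambda doc_id: cosine_similarity(q, self.embeddings[doc_id]),
            reverse=True,
        )
        return ranked[:n_results]

collection = TinyCollection()
collection.add("doc1", "vector databases store embeddings")
collection.add("doc2", "bananas are rich in potassium")
print(collection.query("how do databases store embeddings"))  # ['doc1']
```

Word counts only match on shared vocabulary, so this toy cannot find paraphrases; swapping `embed` for a learned embedding model is what makes the search semantic.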


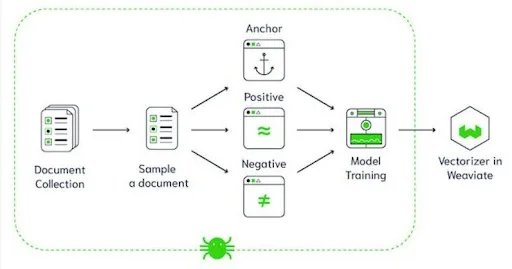

3. Weaviate

Unlike Pinecone, Weaviate is an open-source vector database that simplifies storing data objects and vector embeddings from your preferred ML models.

This versatile tool seamlessly scales to manage billions of data objects without hassle.

It swiftly performs a 10-NN (10-Nearest Neighbors) search within milliseconds across millions of items.

Engineers can have Weaviate vectorize data at import time or supply their own vectors, and then build systems for tasks like question-and-answer extraction, summarization, and categorization.

Key features:

- Integrated modules for AI-driven searches, Q&A functionality, merging LLMs with your data, and automated categorization.

- Comprehensive CRUD (Create, Read, Update, Delete) capabilities.

- Cloud-native, distributed, capable of scaling with evolving workloads, and compatible with Kubernetes for seamless operation.

- Helps move ML models into MLOps workflows smoothly.
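CRUD for a vector database means each stored object carries both its regular properties and its vector, and all four operations apply to the pair. The sketch below is a hypothetical in-memory class meant only to show that shape; it is not Weaviate's client API.

```python
class VectorStore:
    """Hypothetical in-memory store mapping an object id to its properties and vector."""

    def __init__(self):
        self._objects = {}

    def create(self, obj_id, properties, vector):
        if obj_id in self._objects:
            raise KeyError(f"{obj_id} already exists")
        self._objects[obj_id] = {"properties": dict(properties), "vector": list(vector)}

    def read(self, obj_id):
        return self._objects[obj_id]

    def update(self, obj_id, properties=None, vector=None):
        obj = self._objects[obj_id]
        if properties is not None:
            obj["properties"].update(properties)
        if vector is not None:
            # A changed vector changes where the object sits in similarity searches.
            obj["vector"] = list(vector)

    def delete(self, obj_id):
        del self._objects[obj_id]

store = VectorStore()
store.create("article-1", {"title": "Intro to ANN"}, [0.1, 0.9])
store.update("article-1", properties={"title": "Intro to ANN search"})
print(store.read("article-1")["properties"]["title"])  # Intro to ANN search
store.delete("article-1")
```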

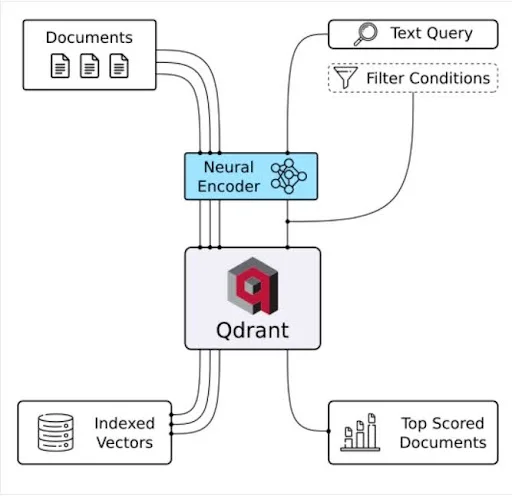

4. Qdrant

Qdrant is a vector database built to make vector similarity search easy.

It operates through an API service, facilitating searches for the most closely related high-dimensional vectors.

With Qdrant, embeddings or neural network encoders can be turned into robust applications for tasks like matching, searching, and providing recommendations.

Some key features of Qdrant include:

- Flexible API: Provides OpenAPI v3 specs along with pre-built clients for multiple programming languages.

- Speed and accuracy: Implements a custom HNSW algorithm for swift and precise searches.

- Advanced filtering: Allows filtering of results based on associated vector payloads, enhancing result accuracy.

- Diverse data support: Payloads accommodate a variety of data types and query conditions, including string matching, numerical ranges, geo-locations, and more.

- Scalability: Cloud-native design with capabilities for horizontal scaling to handle increasing data loads.

- Efficiency: Written in Rust, with dynamic query planning to make efficient use of resources.
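Payload filtering, from the list above, combines a regular condition on attached data with a similarity ranking. Here is a minimal sketch of the concept in plain Python. The point layout, the `must_equal` and `price_range` parameters, and the sample data are all assumptions for illustration and do not mirror Qdrant's actual filter syntax.

```python
import math

points = [
    {"id": 1, "vector": [0.9, 0.1], "payload": {"city": "Berlin", "price": 120}},
    {"id": 2, "vector": [0.8, 0.2], "payload": {"city": "London", "price": 80}},
    {"id": 3, "vector": [0.1, 0.9], "payload": {"city": "Berlin", "price": 60}},
]

def matches(payload, must_equal=None, price_range=None):
    """Check exact-match conditions and an optional inclusive numeric range on 'price'."""
    for key, value in (must_equal or {}).items():
        if payload.get(key) != value:
            return False
    if price_range is not None:
        low, high = price_range
        if not low <= payload["price"] <= high:
            return False
    return True

def filtered_search(query, points, k=1, **conditions):
    """Drop points that fail the payload conditions, then rank the rest by distance."""
    allowed = [p for p in points if matches(p["payload"], **conditions)]
    allowed.sort(key=lambda p: math.dist(p["vector"], query))
    return [p["id"] for p in allowed[:k]]

print(filtered_search([1.0, 0.0], points))                                 # [1]
print(filtered_search([1.0, 0.0], points, must_equal={"city": "London"}))  # [2]
print(filtered_search([1.0, 0.0], points, price_range=(0, 100), k=2))      # [2, 3]
```

This sketch filters first and searches second, which is the simplest ordering to reason about; production engines integrate filtering into the index traversal so that restrictive filters do not slow queries down.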

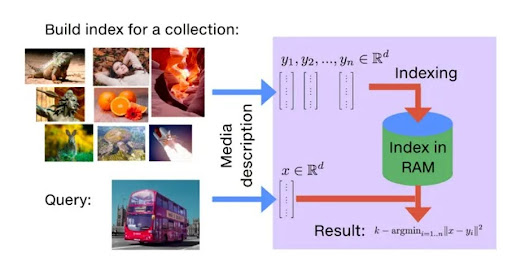

5. Faiss

Open source: Yes

GitHub stars: 23k

Developed by Facebook AI Research, Faiss is an open-source library for fast similarity search and clustering of dense vectors.

It provides methods for searching sets of vectors of any size, including sets that may not fit in RAM.

Faiss also offers supporting code for evaluation and parameter tuning.

Key features:

- Retrieves not only the nearest neighbor but also the second, third, and k-th nearest neighbors.

- Enables the search of multiple vectors simultaneously, not restricted to just one.

- Supports maximum inner product search instead of minimum Euclidean distance search.

- Supports other distances like L1, Linf, etc., albeit to a lesser extent.

- Returns all elements within a specified radius of the query location.

- Provides the option to save the index to disk instead of storing it in RAM.
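Several of the features above are easier to tell apart side by side. The sketch below implements k-nearest-neighbor search over a batch of queries, maximum inner product search, and range search as brute-force Python. It deliberately avoids the Faiss API, so every function name here is illustrative; Faiss provides optimized equivalents of these operations.

```python
import heapq

def squared_l2(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def inner_product(a, b):
    return sum(x * y for x, y in zip(a, b))

def knn_search(database, queries, k):
    """For each query, return the indices of its k nearest vectors (closest first)."""
    return [
        heapq.nsmallest(k, range(len(database)), key=lambda i: squared_l2(database[i], q))
        for q in queries
    ]

def max_inner_product_search(database, queries, k):
    """Same result shape, but ranked by largest inner product instead of smallest distance."""
    return [
        heapq.nlargest(k, range(len(database)), key=lambda i: inner_product(database[i], q))
        for q in queries
    ]

def range_search(database, query, radius):
    """Return the indices of all vectors within the given L2 radius of the query."""
    return [i for i, vec in enumerate(database) if squared_l2(vec, query) <= radius ** 2]

database = [[0.0, 0.0], [1.0, 0.0], [0.0, 2.0], [3.0, 3.0]]
queries = [[0.1, 0.0], [2.5, 2.5]]

print(knn_search(database, queries, k=2))                # [[0, 1], [3, 2]]
print(max_inner_product_search(database, queries, k=1))  # [[3], [3]]
print(range_search(database, [0.0, 0.0], radius=1.5))    # [0, 1]
```

The first query shows why the choice of metric matters: its nearest neighbor by distance is vector 0, but its maximum inner product is with vector 3, because inner product rewards long vectors.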

Faiss serves as a powerful tool for accelerating dense vector similarity searches, offering a range of functionalities and optimizations for efficient and effective search operations.

Wrapping up

In today’s data-driven era, the increasing advancements in artificial intelligence and machine learning highlight the crucial role played by vector databases.

Their exceptional capacity to store, explore, and interpret multi-dimensional data vectors has become integral in fueling a spectrum of AI-powered applications.

From recommendation engines to genomic analysis, these databases stand as fundamental tools, driving innovation and efficacy across various domains.

Frequently asked questions

1. What are the key features I should look out for in vector databases?

When considering a vector database, prioritize features like:

- Efficient search capabilities

- Scalability and performance

- Flexibility in data types

- Advanced filtering options

- API and integration support

2. How do vector databases differ from traditional databases?

Vector databases stand distinct from traditional databases due to their specialized approach to managing and processing data. Here’s how they differ:

- Data structure: Traditional databases organize data in rows and columns, while vector databases focus on storing and handling high-dimensional vectors, particularly suitable for complex data like images, text, and embeddings.

- Search mechanisms: Traditional databases primarily use exact matches or set criteria for searches, whereas vector databases employ similarity-based searches, allowing for more contextually relevant results.

- Specialized functionality: Vector databases offer unique functionalities like nearest-neighbor searches, range searches, and efficient handling of multi-dimensional data, catering to the requirements of AI-driven applications.

- Performance and scalability: Vector databases are optimized for high-dimensional data, so they typically run similarity searches faster than traditional databases and scale to large data volumes.

Understanding these differences can help in choosing the right type of database depending on the nature of the data and the intended applications.