Meet LLaVA: The New Competitor to GPT-4 Vision

OpenAI’s GPT-4 with vision (GPT-4V) recently took the tech world by storm. Yet even before the dust had settled, a new contender entered the fray: LLaVA, the Large Language and Vision Assistant. Open source and free to download, LLaVA is poised to push the boundaries of what openly available image-understanding models can do.

What is LLaVA?

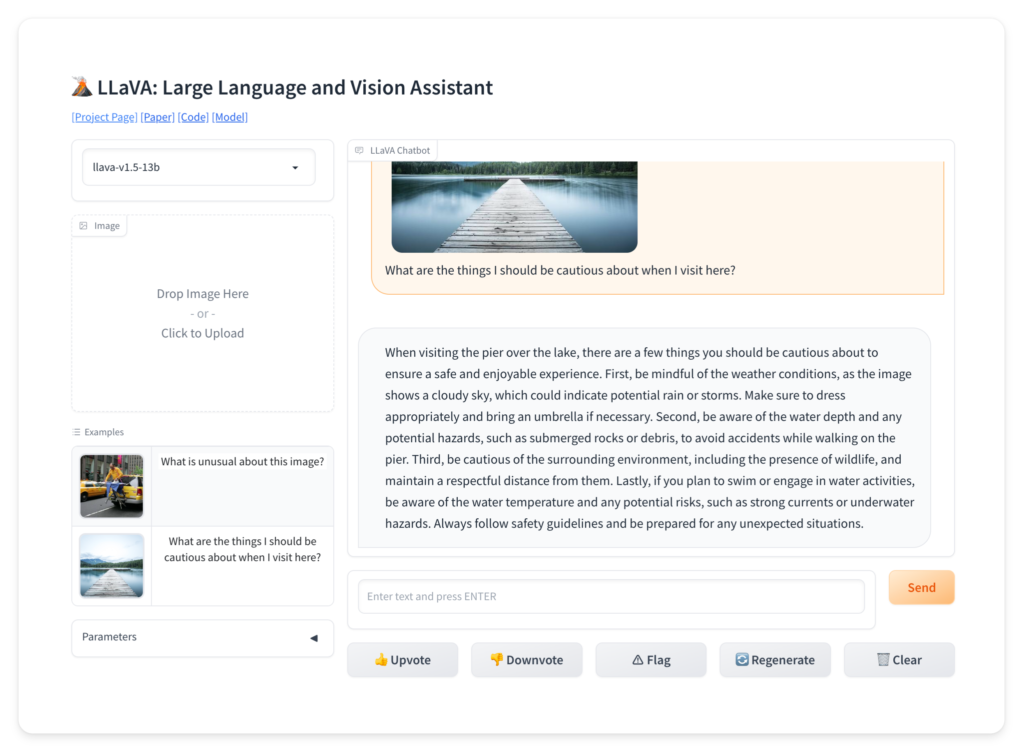

LLaVA is a cutting-edge multimodal model created by researchers from the University of Wisconsin-Madison, Microsoft Research, and Columbia University. In simple terms, it’s a piece of technology designed to understand both visuals (like photos) and language (like text). Imagine a ChatGPT that can also look at a picture and chat with you about it, and that’s LLaVA for you.

Why is LLaVA Special?

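LLaVA isn’t just another image recognition tool. It pairs a vision “encoder” based on OpenAI’s CLIP (think of this as the eyes of the system) with Vicuna, an open-source chatbot model fine-tuned from Meta’s LLaMA that serves as its brain for understanding language. A small projection layer connects the two, translating what the eyes see into a form the brain can read. This combination lets LLaVA hold conversations about images and reason about complex visual information, much as GPT-4 Vision does.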
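To make the “eyes plus brain” idea a little more concrete, here is a toy Python sketch of how image features can be handed to a language model. It assumes the design described in the original LLaVA paper, where a single trainable projection matrix maps each patch feature from the vision encoder into the same embedding space as Vicuna’s word tokens. The function names, the tiny dimensions, and the numbers are all hypothetical; a real model uses learned weights and vectors with thousands of entries.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def project(features: List[Vector], weight: Matrix) -> List[Vector]:
    """Map each vision feature (length d_vision) to the LLM embedding size (d_model).

    `weight` has shape d_model x d_vision and plays the role of the trainable
    projection matrix; here its values are made up for illustration.
    """
    projected = []
    for feat in features:
        row_out = []
        for row in weight:
            # Guard against silent truncation by zip() on mismatched sizes.
            if len(row) != len(feat):
                raise ValueError("weight rows must match the vision feature size")
            row_out.append(sum(w * x for w, x in zip(row, feat)))
        projected.append(row_out)
    return projected


def build_input_sequence(
    image_features: List[Vector], weight: Matrix, text_embeddings: List[Vector]
) -> List[Vector]:
    """Turn image patches into "visual tokens" and place them before the text tokens."""
    visual_tokens = project(image_features, weight)
    return visual_tokens + text_embeddings


# Two image patches with 3 features each, projected into a 2-dimensional "word" space,
# followed by one hypothetical text-token embedding.
patches = [[1.0, 0.0, 2.0], [0.0, 1.0, 1.0]]
W = [[1.0, 0.0, 1.0], [0.0, 2.0, 0.0]]
prompt = [[0.5, 0.5]]
print(build_input_sequence(patches, W, prompt))  # [[3.0, 0.0], [1.0, 2.0], [0.5, 0.5]]
```

The language model then reads this combined sequence just as it would read ordinary text, which is why the vision side can be added without redesigning the chatbot. Later versions such as LLaVA-1.5 swap the single matrix for a small two-layer MLP, but the idea of turning image patches into visual tokens stays the same.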

Open-Source and Ready to Use

What’s even more exciting? If you’re a tech enthusiast or a developer, you can dive into LLaVA’s inner workings. The creators have shared everything online: from the blueprint (the research paper) to the actual code and trained model weights, it’s all out there for curious minds to study, run, and build on.

In Conclusion

While the image recognition landscape is fiercely competitive, LLaVA has carved out a niche for itself in a short time. Its remarkable performance, combined with its open-source nature, makes it a force to be reckoned with in the tech world.

Image recognition technology is evolving rapidly, and with LLaVA now in the mix, the future looks even more promising. The only question is: are you ready to be part of this visual revolution?