DeepSeek R1 is an open-source reasoning model developed by DeepSeek, a Chinese AI company. Unlike traditional language models that focus primarily on text generation and comprehension, DeepSeek R1 specializes in logical inference, mathematical problem-solving, and real-time decision-making. This focus sets it apart in the AI landscape, offering enhanced explainability and reasoning capabilities.

What truly distinguishes DeepSeek R1 is its open-source nature, allowing developers and researchers to explore, modify, and deploy the model within certain technical constraints. This openness fosters innovation and collaboration in the AI community. Moreover, DeepSeek R1 stands out for its affordability, with operational costs significantly lower than its competitors. In fact, it’s estimated to cost only 2% of what users would spend on OpenAI’s O1 model, making advanced AI reasoning accessible to a broader audience.

Understanding the DeepSeek R1 Model

At its core, DeepSeek R1 is designed to excel in areas that set it apart from traditional language models. As noted by experts, “Unlike traditional language models, reasoning models like DeepSeek-R1 specialize in: Logical inference, Mathematical problem-solving, Real-time decision-making”. This specialized focus enables DeepSeek R1 to tackle complex problems with a level of reasoning that mimics human cognitive processes.

The journey to create DeepSeek R1 was not without challenges. DeepSeek-R1 evolved from its predecessor, DeepSeek-R1-Zero, which initially relied on pure reinforcement learning, leading to difficulties in readability and mixed-language responses. To overcome these issues, the developers implemented a hybrid approach, combining reinforcement learning with supervised fine-tuning. This innovative method significantly enhanced the model’s coherence and usability, resulting in the powerful and versatile DeepSeek R1 we see today.

Running DeepSeek R1 Locally

While DeepSeek R1’s capabilities are impressive, you might be wondering how to harness its power on your own machine. This is where Ollama comes into play. Ollama is a versatile tool designed for running and managing Large Language Models (LLMs) like DeepSeek R1 on personal computers. What makes Ollama particularly appealing is its compatibility with major operating systems including macOS, Linux, and Windows, making it accessible to a wide range of users.

One of Ollama’s standout features is its support for API usage, including compatibility with the OpenAI API. This means you can seamlessly integrate DeepSeek R1 into your existing projects or applications that are already set up to work with OpenAI models.
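As a rough illustration of that OpenAI compatibility, the sketch below sends an OpenAI-style chat completion request to a local Ollama server using only the Python standard library. It assumes Ollama is running on its default address (http://localhost:11434) with its OpenAI-compatible routes under /v1, and that the deepseek-r1 model has already been pulled. The helper names `build_chat_request` and `ask` are made up for this example.

```python
import json
import urllib.request

# Assumption: Ollama is serving on its default local address.
OLLAMA_BASE_URL = "http://localhost:11434/v1"


def build_chat_request(prompt, model="deepseek-r1"):
    """Build an OpenAI-style chat completion request for a local Ollama server."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        f"{OLLAMA_BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt, model="deepseek-r1"):
    """Send the request and return the text of the first reply."""
    with urllib.request.urlopen(build_chat_request(prompt, model)) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With the server running, `print(ask("What is 17 * 23?"))` should print the model's reply. Code that already uses an OpenAI client can usually be pointed at the same base URL instead of building requests by hand.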

To get started with running DeepSeek R1 locally using Ollama, follow these installation instructions for your operating system:

- For macOS:
  - Download the installer from the Ollama website
  - Install and run the application
- For Linux:
  - Use the curl command for quick installation: `curl -fsSL https://ollama.com/install.sh | sh`
  - Alternatively, manually install using the .tgz package
- For Windows:
  - Download and run the installer from the Ollama website

Once installed, you can start using DeepSeek R1 with three commands. Check your Ollama version with `ollama -v`, download the DeepSeek R1 model with `ollama pull deepseek-r1`, and run it with `ollama run deepseek-r1`. After these steps, DeepSeek R1 runs entirely on your personal computer, ready for reasoning and problem-solving tasks.
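If you would rather script the setup than type the commands by hand, a small Python wrapper is one option. This is only a sketch: the function names are invented for illustration, and it deliberately stops before `ollama run`, since that command opens an interactive session.

```python
import shutil
import subprocess

MODEL = "deepseek-r1"  # model name as used in the commands above


def ollama_commands(model=MODEL):
    """Return the three CLI steps as argument lists, in order."""
    return [
        ["ollama", "-v"],           # confirm the install and print the version
        ["ollama", "pull", model],  # download the model
        ["ollama", "run", model],   # start an interactive session
    ]


def run_setup(model=MODEL):
    """Run the version check and the pull; leave `ollama run` to the user."""
    if shutil.which("ollama") is None:
        raise RuntimeError("ollama was not found on PATH; install it first")
    for command in ollama_commands(model)[:2]:
        subprocess.run(command, check=True)
```

Calling `run_setup()` checks the installation and downloads the model. Passing a tagged name such as `deepseek-r1:7b` selects one of the smaller variants instead of the default.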

DeepSeek R1 Distilled Models

To enhance efficiency while maintaining robust reasoning capabilities, DeepSeek has developed a range of distilled models based on the R1 architecture. These models come in various sizes, catering to different computational needs and hardware configurations. The distillation process allows for more compact models that retain much of the original model’s power, making advanced AI reasoning accessible to a broader range of users and devices.

Qwen-based Models

- DeepSeek-R1-Distill-Qwen-1.5B: Achieves an impressive 83.9% accuracy on the MATH-500 benchmark, though it shows lower performance on coding tasks.

- DeepSeek-R1-Distill-Qwen-7B: Demonstrates strength in mathematical reasoning and factual questions, with moderate coding abilities.

- DeepSeek-R1-Distill-Qwen-14B: Excels in complex mathematical problems but requires improvement in coding tasks.

- DeepSeek-R1-Distill-Qwen-32B: Shows superior performance in multi-step mathematical reasoning and versatility across various tasks, although it’s less optimized for programming specifically.

Llama-based Models

- DeepSeek-R1-Distill-Llama-8B: Performs well in mathematical tasks but has limitations in coding applications.

- DeepSeek-R1-Distill-Llama-70B: Achieves top-tier performance in mathematics and demonstrates competent coding skills, comparable to OpenAI’s o1-mini model.

One of the key advantages of these distilled models is their versatility in terms of hardware compatibility. They are designed to run efficiently on a variety of setups, including personal computers with CPUs, GPUs, or Apple Silicon. This flexibility allows users to choose the model size that best fits their available computational resources and specific use case requirements, whether it’s for mathematical problem-solving, coding assistance, or general reasoning tasks.
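To make the "choose the size that fits your hardware" advice concrete, here is a back-of-the-envelope sketch. The parameter counts come from the model names above. The memory rule of thumb (4-bit weights plus 20% headroom) is an assumption for illustration, not a figure published by DeepSeek or Ollama, and real usage depends on quantization and context length.

```python
# Parameter counts in billions, taken from the model names listed above.
DISTILLED_MODELS = {
    "DeepSeek-R1-Distill-Qwen-1.5B": 1.5,
    "DeepSeek-R1-Distill-Qwen-7B": 7,
    "DeepSeek-R1-Distill-Llama-8B": 8,
    "DeepSeek-R1-Distill-Qwen-14B": 14,
    "DeepSeek-R1-Distill-Qwen-32B": 32,
    "DeepSeek-R1-Distill-Llama-70B": 70,
}

# Assumptions (not from the article): 4-bit weights, plus 20% headroom
# for the KV cache and runtime buffers.
BYTES_PER_PARAM = 0.5
OVERHEAD_FACTOR = 1.2


def estimated_memory_gb(params_billion):
    """Rough memory footprint in GB for a model of the given size."""
    return params_billion * BYTES_PER_PARAM * OVERHEAD_FACTOR


def largest_model_that_fits(available_gb):
    """Return the biggest listed model whose estimate fits, or None."""
    fitting = [
        (size, name)
        for name, size in DISTILLED_MODELS.items()
        if estimated_memory_gb(size) <= available_gb
    ]
    return max(fitting)[1] if fitting else None
```

Under these assumptions, `largest_model_that_fits(16)` picks the 14B Qwen distill, while the 70B Llama distill needs roughly 42 GB.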

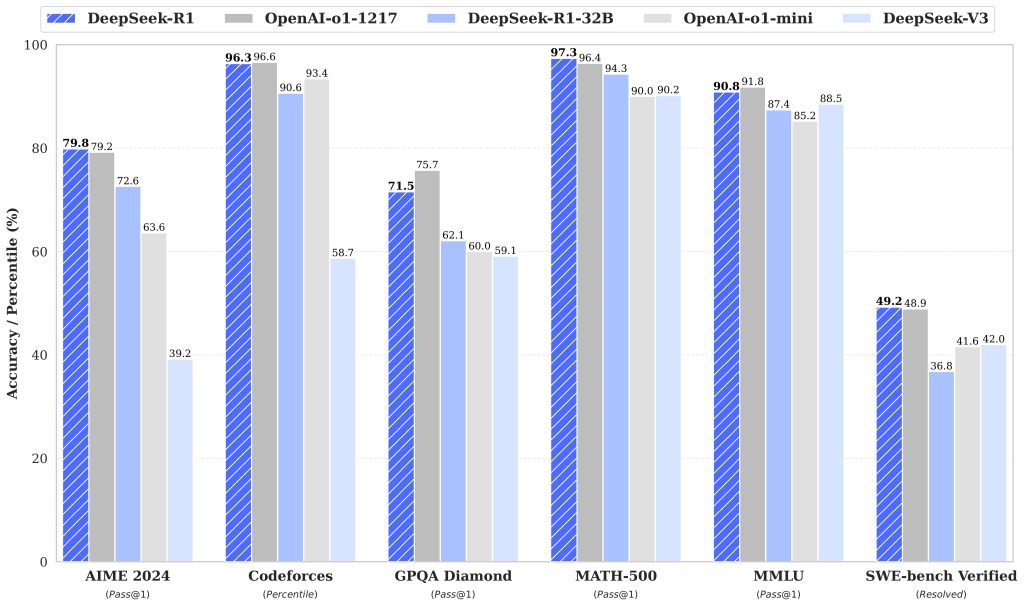

DeepSeek R1 vs. OpenAI O1

As we delve deeper into the capabilities of DeepSeek R1, it’s crucial to understand how it stacks up against one of the industry’s leading models, OpenAI O1. This comparison not only highlights DeepSeek R1’s strengths but also sheds light on areas where it might need improvement.

One of the most striking differences between these models is their cost. DeepSeek R1 is far cheaper: the estimate cited earlier puts it at about 2% of what users would spend on OpenAI O1, and even at the list prices below it comes to under 4%:

| Model | Input Cost (per million tokens) | Output Cost (per million tokens) |
|---|---|---|
| DeepSeek R1 | $0.55 | $2.19 |
| OpenAI O1 | $15.00 | $60.00 |
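The arithmetic behind the comparison is easy to reproduce. The sketch below uses only the four prices from the table; the function names and the example workload are illustrative.

```python
# Prices in USD per million tokens, copied from the table above.
PRICES = {
    "DeepSeek R1": {"input": 0.55, "output": 2.19},
    "OpenAI O1": {"input": 15.00, "output": 60.00},
}


def request_cost(model, input_tokens, output_tokens):
    """Cost in USD of one workload for the given model."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


def cost_ratio(input_tokens, output_tokens):
    """DeepSeek R1 cost as a fraction of OpenAI O1 cost for the same workload."""
    r1 = request_cost("DeepSeek R1", input_tokens, output_tokens)
    o1 = request_cost("OpenAI O1", input_tokens, output_tokens)
    return r1 / o1
```

For a workload of one million input tokens and one million output tokens, this gives $2.74 for DeepSeek R1 against $75.00 for OpenAI O1, a ratio of about 3.7% at these list prices.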

In terms of functionality, both models were put to the test using historical financial data for SPY, an ETF that tracks the S&P 500. When it came to SQL query generation for data analysis, both DeepSeek R1 and OpenAI O1 demonstrated high accuracy. However, R1 had the edge in cost-efficiency and sometimes gave more insightful answers, such as including ratios for better comparisons.

Both models excelled in generating algorithmic trading strategies. Notably, DeepSeek R1’s strategies showed promising results, outperforming the S&P 500 and maintaining superior Sharpe and Sortino ratios compared to the market. This demonstrates R1’s potential as a powerful tool for financial analysis and strategy development.
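For readers unfamiliar with the two risk metrics mentioned above, here is a minimal sketch of how Sharpe and Sortino ratios are commonly computed from a series of periodic returns. The annualization factor of 252 trading days, the default per-period risk-free rate of zero, and the use of the sample standard deviation are conventions assumed here. The article does not say how the original comparison calculated them.

```python
import math
from statistics import mean, stdev


def sharpe_ratio(returns, risk_free=0.0, periods_per_year=252):
    """Annualized Sharpe ratio: mean excess return over its standard deviation."""
    excess = [r - risk_free for r in returns]
    return mean(excess) / stdev(excess) * math.sqrt(periods_per_year)


def sortino_ratio(returns, target=0.0, periods_per_year=252):
    """Annualized Sortino ratio: like Sharpe, but only returns below the
    target count as risk."""
    excess = [r - target for r in returns]
    # Downside deviation: root mean square of the below-target shortfalls.
    downside = math.sqrt(mean([min(0.0, e) ** 2 for e in excess]))
    if downside == 0:
        raise ValueError("no returns fall below the target; Sortino is undefined")
    return mean(excess) / downside * math.sqrt(periods_per_year)
```

Both functions take per-period returns as fractions, for example `[0.01, -0.02, 0.03]` for daily moves of +1%, -2%, and +3%. A strategy with occasional large gains but few losses will score higher on Sortino than on Sharpe, because upside volatility is not penalized.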

However, it’s important to note that DeepSeek R1 isn’t without its challenges. The model occasionally generated invalid SQL queries and experienced timeouts. These issues were often mitigated by R1’s self-correcting logic, but they highlight areas where the model could be improved to match the consistency of more established competitors like OpenAI O1.
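The article does not describe how that self-correction works internally. One common way to get a similar effect in application code is a validate-and-retry loop, sketched below with SQLite. The `generate` callable is hypothetical: it stands in for whatever function sends the question (and the previous error, if any) to the model, and it is not part of any DeepSeek or Ollama API. Timeouts are not handled here.

```python
import sqlite3


def validate_sql(connection, query):
    """Return None if SQLite can compile the query, else the error message."""
    try:
        # EXPLAIN compiles the statement without running the query itself.
        connection.execute(f"EXPLAIN {query}")
        return None
    except sqlite3.Error as error:
        return str(error)


def generate_valid_sql(generate, connection, question, max_attempts=3):
    """Ask `generate` for SQL, feeding errors back until a query validates.

    `generate(question, previous_error)` is a hypothetical callable that
    wraps the model and returns a SQL string.
    """
    error = None
    for _ in range(max_attempts):
        query = generate(question, error)
        error = validate_sql(connection, query)
        if error is None:
            return query
    raise ValueError(f"no valid query after {max_attempts} attempts: {error}")
```

The loop gives the model a bounded number of chances and passes the database's own error message back each time, which is often enough to fix a misspelled keyword or a wrong column name.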

What next?

DeepSeek R1 has emerged as a strong option for financial analysis and AI modeling. It is open-source and affordable, which makes it accessible to a wide audience, including non-paying users. This accessibility, combined with its performance in areas like algorithmic trading and complex reasoning, positions DeepSeek R1 as a formidable player in the AI landscape.

Q: How might DeepSeek R1 evolve in the future?

A: As an open-source model, DeepSeek R1 has the potential for continuous improvement through community contributions. We may see enhanced performance, expanded capabilities, and even more specialized versions tailored for specific industries or tasks.

Q: What opportunities does DeepSeek R1 present for developers?

A: Developers have the unique opportunity to explore, modify, and build upon the DeepSeek R1 model. This openness allows for innovation in AI applications, potentially leading to breakthroughs in fields ranging from finance to scientific research.

In conclusion, we encourage both seasoned AI practitioners and newcomers to explore DeepSeek models and contribute to their open-source development. The democratization of advanced AI tools like DeepSeek R1 opens up exciting possibilities for innovation and progress in the field of artificial intelligence.