Gemini Embedding 2: Google’s First Multimodal Embedding Model

Gemini Embedding 2: Features, Benchmarks, Pricing & How to Get Started

Last week, Google released Gemini Embedding 2, the first natively multimodal embedding model built on the Gemini architecture. If you work with embeddings in any capacity, this deserves your attention. It has the potential to significantly disrupt the multi-model embedding pipelines that most teams rely on today.

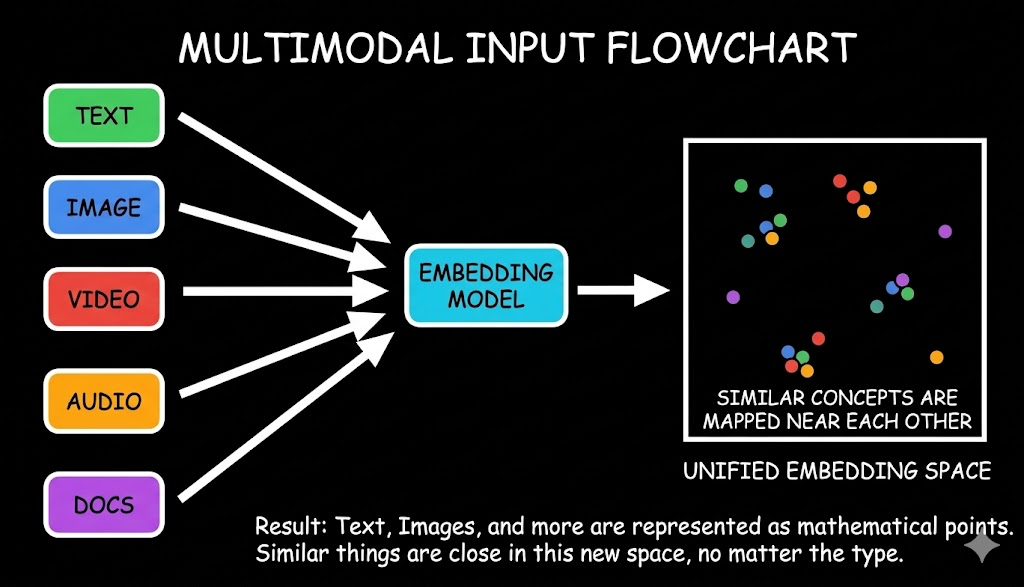

Until now, the flagship embedding models from OpenAI, Cohere, and Voyage were primarily text-based. A few multimodal options existed — CLIP for image-text alignment, Voyage Multimodal 3.5 for images and video — but none covered the full spectrum of modalities in a single, unified vector space. Audio typically had to be transcribed before embedding. Video required frame extraction combined with separate transcript embeddings. Images lived in their own vector space entirely.

Gemini Embedding 2 changes that equation. One model, one API call, one vector space.

Let’s dig into what’s new.

What Is Gemini Embedding 2?

Gemini Embedding 2 (gemini-embedding-2-preview) is Google DeepMind’s first fully multimodal embedding model. It takes text, images, video clips, audio recordings, and PDF documents and converts all of them into vectors that live in the same shared semantic space.

Unlike earlier multimodal approaches such as CLIP, which pair a vision encoder with a text encoder and align them with contrastive learning at the end, Gemini Embedding 2 is built on the Gemini foundation model itself. This means it inherits deep cross-modal understanding from the ground up.


Practical example: Imagine you’re building a Learning Management System (LMS) with video tutorials, audio lectures, and written guides. With Gemini Embedding 2, you can store embeddings for all of this content in a single vector space and build a RAG-based chatbot that retrieves relevant chunks from videos, audio, and documents alike. Previously, this required a multi-layered embedding pipeline — and even then, it only captured transcripts, missing the visual context of a video or the tone of a speaker’s voice.
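To make the LMS scenario concrete, here is a minimal sketch of a single index that holds chunks from different media and ranks them together. Everything in it is illustrative: the `Item` class, the `search` helper, the file names, and the 3-dimensional toy vectors are assumptions standing in for real embeddings and a real vector database.

```python
import math
from dataclasses import dataclass


@dataclass
class Item:
    """One indexed chunk; in practice the vector would come from the embedding model."""
    item_id: str
    modality: str  # e.g. "audio", "video", "pdf"
    vector: list[float]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(index: list[Item], query_vector: list[float], k: int = 2) -> list[Item]:
    """Return the k items most similar to the query, regardless of modality."""
    ranked = sorted(index, key=lambda item: cosine(item.vector, query_vector), reverse=True)
    return ranked[:k]


# Toy vectors, made up for illustration only.
index = [
    Item("lecture-03.mp3", "audio", [0.9, 0.1, 0.0]),
    Item("intro-video.mp4", "video", [0.8, 0.3, 0.1]),
    Item("style-guide.pdf", "pdf", [0.0, 0.2, 0.9]),
]
query_vector = [1.0, 0.2, 0.0]  # pretend this is the embedded user question

top = search(index, query_vector)
for item in top:
    print(item.modality, item.item_id)
```

Because every item lives in the same space, the ranking needs no per-modality logic. The modality tag is only metadata that tells the chatbot how to present the retrieved chunk.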

Full-size embeddings have 3072 dimensions, but because the model uses Matryoshka Representation Learning, you don’t have to use all of them. You can scale down to 1536 or 768 and still get usable results.

Matryoshka Representation Learning (MRL) is a technique for training embedding models so that the learned representations are useful not only at their full dimensionality but also at various smaller dimensions — nested inside one another like Russian matryoshka dolls. During training, the loss function is computed not just on the full embedding but also on multiple prefixes of the embedding vector. This encourages the model to pack the most important information into the earliest dimensions, with each subsequent dimension adding finer-grained detail — a coarse-to-fine structure.
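In practice, using a smaller MRL embedding means keeping a prefix of the vector. The sketch below shows that step with plain Python. The helper name and the 8-dimensional toy vector are assumptions for illustration. Rescaling the prefix to unit length is a common precaution when a vector store ranks by dot product, not something this article's source specifies.

```python
import math


def truncate_and_normalize(vector: list[float], dims: int) -> list[float]:
    """Keep the first `dims` values and rescale the result to unit length."""
    if not 0 < dims <= len(vector):
        raise ValueError("dims must be between 1 and len(vector)")
    prefix = vector[:dims]
    norm = math.sqrt(sum(x * x for x in prefix))
    if norm == 0.0:
        raise ValueError("cannot normalize an all-zero prefix")
    return [x / norm for x in prefix]


# A made-up 8-dimensional vector standing in for a 3072-dimensional embedding.
full = [0.6, 0.5, 0.4, 0.3, 0.2, 0.2, 0.1, 0.1]
small = truncate_and_normalize(full, 4)

print(len(small))
print(round(math.sqrt(sum(x * x for x in small)), 6))
```

The same function would take a 3072-dimensional vector down to 1536 or 768. Whatever size you choose, index and query vectors must be truncated to the same length.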

Supported Modalities & Input Limits

The model accepts five types of input, all mapped into the same embedding space:

| Modality | Input Limit | Formats |
|---|---|---|
| Text | Up to 8,192 tokens | Plain text |
| Images | Up to 6 images per request | PNG, JPEG |
| Video | Up to 120 seconds | MP4, MOV |
| Audio | Up to 80 seconds (native, no transcription) | MP3, WAV |
| PDFs | Directly embedded | PDF documents |
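One way to use these limits is to check a planned request before sending it. The sketch below is a hypothetical client-side helper, not part of any SDK. The `RequestPlan` class and `limit_violations` function are invented for illustration, the token count is assumed to come from your own tokenizer, and the constants simply mirror the table.

```python
from dataclasses import dataclass

# Limits copied from the table above; preview-era values that may change.
MAX_TEXT_TOKENS = 8192
MAX_IMAGES_PER_REQUEST = 6
MAX_VIDEO_SECONDS = 120
MAX_AUDIO_SECONDS = 80


@dataclass
class RequestPlan:
    """Hypothetical description of what one embedding request would contain."""
    text_tokens: int = 0
    image_count: int = 0
    video_seconds: float = 0.0
    audio_seconds: float = 0.0


def limit_violations(plan: RequestPlan) -> list[str]:
    """Return one human-readable message for each limit the plan exceeds."""
    problems = []
    if plan.text_tokens > MAX_TEXT_TOKENS:
        problems.append(f"text: {plan.text_tokens} tokens > {MAX_TEXT_TOKENS}")
    if plan.image_count > MAX_IMAGES_PER_REQUEST:
        problems.append(f"images: {plan.image_count} > {MAX_IMAGES_PER_REQUEST}")
    if plan.video_seconds > MAX_VIDEO_SECONDS:
        problems.append(f"video: {plan.video_seconds}s > {MAX_VIDEO_SECONDS}s")
    if plan.audio_seconds > MAX_AUDIO_SECONDS:
        problems.append(f"audio: {plan.audio_seconds}s > {MAX_AUDIO_SECONDS}s")
    return problems


ok_plan = RequestPlan(text_tokens=500, image_count=2)
too_big = RequestPlan(image_count=7, audio_seconds=95.0)

print(limit_violations(ok_plan))
print(limit_violations(too_big))
```

An empty list means the plan fits the published limits. Anything else is a cue to split the input before calling the API.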

How It Compares to Existing Models

TLDR: Google’s new Gemini Embedding 2 model tops its competitors (its own predecessor, Amazon Nova 2, and Voyage Multimodal 3.5) across nearly every modality: text, image, video, and speech. It leads most convincingly in video retrieval and image-text matching. The only benchmark where it doesn’t win is document retrieval, where Voyage edges slightly ahead. Speech-text retrieval is a category Gemini owns alone since no competitor even supports it.

Google published benchmark comparisons against its own legacy models, Amazon Nova 2 Multimodal Embeddings, and Voyage Multimodal 3.5. Here’s the full picture:

Text-Text

| Metric | Gemini Embedding 2 | gemini-embedding-001 | Amazon Nova 2 | Voyage Multimodal 3.5 |
|---|---|---|---|---|
| MTEB Multilingual (Mean Task) | 69.9 | 68.4 | 63.8** | 58.5*** |
| MTEB Code (Mean Task) | 84.0 | 76.0 | — | — |

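Gemini Embedding 2 leads on multilingual text, 1.5 points above its predecessor and roughly 6 points above Amazon Nova 2, and it jumps 8 points over gemini-embedding-001 on code retrieval. Neither Amazon Nova 2 nor Voyage reports code scores, and the Voyage multilingual figure is for the text-only voyage-3.5 model.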

Text-Image

| Metric | Gemini Embedding 2 | multimodalembedding@001 | Amazon Nova 2 | Voyage Multimodal 3.5 |
|---|---|---|---|---|
| TextCaps (recall@1) | 89.6 | 74.0 | 76.0 | 79.4 |
| Docci (recall@1) | 93.4 | — | 84.0 | 83.8 |

A clear lead in text-to-image retrieval — over 9 points ahead of the nearest competitor on both benchmarks.

Image-Text

| Metric | Gemini Embedding 2 | multimodalembedding@001 | Amazon Nova 2 | Voyage Multimodal 3.5 |
|---|---|---|---|---|
| TextCaps (recall@1) | 97.4 | 88.1 | 88.9 | 88.6 |
| Docci (recall@1) | 91.3 | — | 76.5 | 77.4 |

Image-to-text retrieval shows similarly wide gaps: on Docci, Gemini Embedding 2 is nearly 15 points ahead of Amazon Nova 2 and about 14 ahead of Voyage Multimodal 3.5.

Text-Document

| Metric | Gemini Embedding 2 | multimodalembedding@001 | Amazon Nova 2 | Voyage Multimodal 3.5 |
|---|---|---|---|---|
| ViDoRe v2 (ndcg@10) | 64.9 | 28.9 | 60.6 | 65.5** |

This is the one benchmark where Voyage Multimodal 3.5 edges ahead, 65.5 to 64.9, and the Voyage score is self-reported. Document retrieval is close among the top models.

Text-Video

| Metric | Gemini Embedding 2 | multimodalembedding@001 | Amazon Nova 2 | Voyage Multimodal 3.5 |
|---|---|---|---|---|
| Vatex (ndcg@10) | 68.8 | 54.9 | 60.3 | 55.2 |
| MSR-VTT (ndcg@10) | 68.0 | 57.9 | 67.0 | 63.0** |
| Youcook2 (ndcg@10) | 52.5 | 34.9 | 34.7 | 31.4** |

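Video retrieval is where Gemini Embedding 2 pulls furthest ahead: on Youcook2 it scores over 21 points above Voyage and nearly 18 above Amazon Nova 2, and on Vatex it is over 13 points above Voyage. MSR-VTT is much closer, with a 1-point lead over Amazon Nova 2.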

Speech-Text

| Metric | Gemini Embedding 2 |
|---|---|
| MSEB (mrr@10) | 73.9 |
| MSEB ASR**** (mrr@10) | 70.4 |

Speech-text retrieval is entirely uncontested — neither Amazon nor Voyage support it. This is a category Gemini Embedding 2 owns outright.

Notes: — score not available; ** self-reported; *** voyage-3.5; **** an ASR model converts audio queries to text.
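The tables report recall@1, nDCG@10, and MRR@10. As a rough guide to what those numbers measure, here is a minimal sketch of the three metrics for a single query with binary relevance. The function names and document IDs are illustrative. Published benchmarks average over many queries, may use graded relevance, and appear here to be scaled to a 0 to 100 range, so treat this as the textbook definitions and not as any benchmark's exact scoring code.

```python
import math


def recall_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the relevant items that appear in the top k results."""
    hits = sum(1 for doc in ranked[:k] if doc in relevant)
    return hits / len(relevant)


def mrr_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Reciprocal rank of the first relevant item within the top k, else 0."""
    for position, doc in enumerate(ranked[:k], start=1):
        if doc in relevant:
            return 1.0 / position
    return 0.0


def ndcg_at_k(ranked: list[str], relevant: set[str], k: int) -> float:
    """Binary-relevance nDCG: discounted gain divided by the best possible gain."""
    dcg = sum(
        1.0 / math.log2(position + 1)
        for position, doc in enumerate(ranked[:k], start=1)
        if doc in relevant
    )
    ideal_hits = min(k, len(relevant))
    idcg = sum(1.0 / math.log2(position + 1) for position in range(1, ideal_hits + 1))
    return dcg / idcg if idcg > 0 else 0.0


# One made-up query: the system returned four documents, two of which are relevant.
ranked = ["d3", "d1", "d7", "d2"]
relevant = {"d1", "d2"}

print(recall_at_k(ranked, relevant, 1))
print(mrr_at_k(ranked, relevant, 10))
print(round(ndcg_at_k(ranked, relevant, 10), 4))
```

recall@1 only rewards a hit in the first position, while MRR and nDCG give partial credit for relevant results further down the list. That is why scores on different metrics should not be compared directly across tables.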

Pricing

The model is currently free during public preview. Here is how the free and paid tiers break down:

| | Free Tier | Paid Tier (per 1M tokens) |
|---|---|---|
| Text input | Free of charge | $0.20 |
| Image input | Free of charge | $0.45 ($0.00012 per image) |
| Audio input | Free of charge | $6.50 ($0.00016 per second) |
| Video input | Free of charge | $12.00 ($0.00079 per frame) |
| Used to improve Google’s products | Yes | No |
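To turn the paid-tier rates into a budget, a back-of-the-envelope estimate is enough. The sketch below uses the per-unit prices from the table. The function name and the workload numbers are made up for illustration. Because the table does not say how many frames the API bills per second of video, the frame count is left as an input you would need to confirm.

```python
# Paid-tier rates copied from the table above (USD); preview pricing may change.
TEXT_USD_PER_MILLION_TOKENS = 0.20
IMAGE_USD_EACH = 0.00012
AUDIO_USD_PER_SECOND = 0.00016
VIDEO_USD_PER_FRAME = 0.00079


def estimate_cost_usd(
    text_tokens: int = 0,
    images: int = 0,
    audio_seconds: float = 0.0,
    video_frames: int = 0,
) -> float:
    """Sum the per-modality charges for one workload."""
    return (
        text_tokens / 1_000_000 * TEXT_USD_PER_MILLION_TOKENS
        + images * IMAGE_USD_EACH
        + audio_seconds * AUDIO_USD_PER_SECOND
        + video_frames * VIDEO_USD_PER_FRAME
    )


# Hypothetical workload: 5M text tokens, 10,000 images, 1 hour of audio, 1,000 video frames.
total = estimate_cost_usd(
    text_tokens=5_000_000,
    images=10_000,
    audio_seconds=3_600,
    video_frames=1_000,
)
print(f"${total:.3f}")  # 1.00 + 1.20 + 0.576 + 0.79 = $3.566
```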

Getting Started

The model is available now in public preview via the Gemini API and Vertex AI under the model ID gemini-embedding-2-preview. It integrates with LangChain, LlamaIndex, Haystack, Weaviate, Qdrant, ChromaDB, and Vector Search.

```python
from google import genai
from google.genai import types

# For Vertex AI:
# PROJECT_ID='<add_here>'
# client = genai.Client(vertexai=True, project=PROJECT_ID, location='us-central1')

client = genai.Client()

with open("example.png", "rb") as f:
    image_bytes = f.read()

with open("sample.mp3", "rb") as f:
    audio_bytes = f.read()

# Embed text, image, and audio
result = client.models.embed_content(
    model="gemini-embedding-2-preview",
    contents=[
        "What is the meaning of life?",
        types.Part.from_bytes(
            data=image_bytes,
            mime_type="image/png",
        ),
        types.Part.from_bytes(
            data=audio_bytes,
            mime_type="audio/mpeg",
        ),
    ],
)

print(result.embeddings)
```

Try it out here!

We’ve built a demo app where you can test out the multimodal retrieval performance of gemini-embedding-2.

You can get an API key by logging in to aistudio.google.com.

Limitations to Watch

- The model is still in public preview (the “preview” tag means pricing and behavior may change before GA).

- Video input is capped at 120 seconds and audio at 80 seconds.

- Performance on niche domains like financial QA is weaker; evaluate against your specific data before committing.

- For pure text pipelines with no multimodal plans, the cost premium over text-only models may not be justified.
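The duration caps in the list above mean longer recordings have to be split before embedding. A minimal sketch of that planning step follows. The `plan_segments` helper is hypothetical, it uses simple back-to-back windows with no overlap, and the actual cutting of the media file (for example with a tool such as ffmpeg) is not shown.

```python
def plan_segments(total_seconds: float, max_seconds: float) -> list[tuple[float, float]]:
    """Split [0, total_seconds] into consecutive windows no longer than max_seconds."""
    if total_seconds < 0 or max_seconds <= 0:
        raise ValueError("total_seconds must be >= 0 and max_seconds must be > 0")
    total = float(total_seconds)
    segments = []
    start = 0.0
    while start < total:
        end = min(start + max_seconds, total)
        segments.append((start, end))
        start = end
    return segments


# A 200-second audio lecture against the 80-second audio cap.
audio_segments = plan_segments(200, 80)
# A 5-minute video against the 120-second video cap.
video_segments = plan_segments(300, 120)

print(audio_segments)
print(video_segments)
```

Each window would then be embedded separately and stored with its start and end times, so a retrieved chunk can point back to the right moment in the original file. Overlapping windows are a reasonable variation if content near a boundary matters.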

The Bottom Line

Gemini Embedding 2 isn’t just an incremental improvement; it’s a category shift. For teams building multimodal RAG systems, semantic search across media types, or unified knowledge bases, it collapses what used to be a multi-model, multi-pipeline problem into a single API call. If your data spans more than just text, this is the model to evaluate first.

Building multimodal RAG shouldn’t mean stitching together embedding models, vector databases, and retrieval logic from scratch. If you want a managed RAG-as-a-Service solution that handles the embedding pipeline for you, sign up for the free trial at Cody and start building today.